In the world of software engineering, we obsess over programming languages: their syntax, their type systems, their performance characteristics, their paradigms. We debate Rust versus Go, Python's readability versus C++'s control, Haskell's purity versus JavaScript's chaos. Yet we rarely acknowledge the elephant in the room — or rather, the language we all use every day that makes every other language look like a model of crystalline precision: English.

English is, without question, the worst programming language ever created. It is not merely flawed; it is fundamentally unsuited for the reliable transmission of complex ideas. Anyone who has ever tried to communicate a non-trivial technical concept to another human being knows this instinctively. The ambiguities, the contextual dependencies, the endless opportunities for misinterpretation — English excels at all of them.

If you have spent any time in meetings, code reviews, specification documents, or even casual Slack threads, you have felt the pain. And if you have any background in mathematics, physics, or formal logic, the contrast becomes almost comically stark.

Precision: The Foundation of Reliable Computation (and Communication)

At its core, a good programming language is a formal system designed for unambiguous execution. Every valid program has exactly one meaning under the language's semantics. A compiler or interpreter will produce the same behavior every time, assuming the same inputs and environment. This is not an accident; it is the entire point.

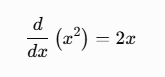

Mathematics and physics adopted formal languages for the same reason. When Newton or Leibniz developed calculus, they didn't just wave their hands and say "the rate of change, you know, kinda like speed but for curves." They created notation — symbols, rules of manipulation, axioms — that allowed precise statements like:

$$\frac{d}{dx}\,x^2 = 2x$$

This expression has one, and only one, interpretation among those who understand the formal system. There is no room for "well, depending on what you mean by derivative" or "in my experience, sometimes it's 2x plus or minus a bit."

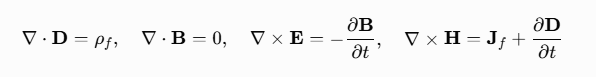

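Physics follows suit. Maxwell's equations in differential form are not poetic suggestions:

$$
\begin{aligned}
\nabla \cdot \mathbf{E} &= \frac{\rho}{\varepsilon_0} \\
\nabla \cdot \mathbf{B} &= 0 \\
\nabla \times \mathbf{E} &= -\frac{\partial \mathbf{B}}{\partial t} \\
\nabla \times \mathbf{B} &= \mu_0 \mathbf{J} + \mu_0 \varepsilon_0 \frac{\partial \mathbf{E}}{\partial t}
\end{aligned}
$$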

These are not open to opinion-based interpretation. Misunderstand a sign or a vector component, and your electromagnetic device will quite literally fail to function. Engineers and physicists spend years learning to think within these formalisms precisely because natural language is too sloppy for the phenomena they describe.

Formal languages exist because human natural languages are terrible at edge cases, scope, and unintended interpretations. We invented predicate logic, set theory, lambda calculus, and programming languages to escape the swamp of ambiguity.

English, by contrast, is the swamp.

The Many Ways English Fails as a Specification Language

Consider something as seemingly simple as a requirement: "The system shall process transactions quickly."

What does "quickly" mean? Under 100ms? Under 500ms? Does it depend on load? On hardware? On network conditions? Is this a hard guarantee or a soft target? English offers no mechanism to distinguish these.
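One way to make "quickly" checkable is to restate it as a percentile over measured latencies. The sketch below assumes a hypothetical requirement, "99% of transactions complete in under 100 ms", and uses the nearest-rank percentile; the threshold, the percentile, and the helper names are illustrative choices that the original sentence never made.

```python
import math


def percentile(samples_ms: list[float], p: float) -> float:
    """Nearest-rank percentile: the smallest sample such that at least
    p percent of all samples are less than or equal to it."""
    if not samples_ms:
        raise ValueError("percentile of an empty sample is undefined")
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(samples_ms)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


# Hypothetical requirement: p99 latency strictly under 100 ms.
P99_BUDGET_MS = 100.0


def meets_latency_requirement(samples_ms: list[float]) -> bool:
    return percentile(samples_ms, 99) < P99_BUDGET_MS
```

Even this version leaves questions open (what counts as one transaction, where the clock starts and stops, under what load the samples were taken), but at least they are now visible questions rather than hidden ones.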

Now imagine trying to implement a sorting algorithm based on an English description:

"Sort the list in ascending order, but put the most important items first, unless they're duplicates, in which case keep the original order for those."

Good luck. Is "important" defined? What constitutes a duplicate? Does "original order" refer to stable sort semantics? English leaves all of this to intuition, shared context, and — inevitably — arguments in code review.
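Here is one of several defensible readings, written down so it can at least be argued with: important items first, then ascending by value within each group, with ties (the "duplicates", under this reading) left in their original relative order. The `Item` type and its `important` flag are hypothetical, since the English never says how importance is determined. Python's `sorted` is guaranteed stable, which is what supplies the "keep the original order" part.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Item:
    # Hypothetical record: the original requirement defines none of these fields.
    name: str
    value: int
    important: bool


def sort_items(items: list[Item]) -> list[Item]:
    # Key: important items first (False sorts before True, so negate the flag),
    # then ascending by value. sorted() is stable, so items with equal keys
    # keep the order in which they appeared in the input.
    return sorted(items, key=lambda it: (not it.important, it.value))
```

Another reader could just as reasonably treat "duplicates" as equal values regardless of importance, or drop them entirely. Writing the key function forces that choice into the open.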

Real-world examples abound in software projects:

- "Handle errors gracefully" — Does this mean log them? Retry? Notify the user? Crash the process? Return a default value?

- "Make the UI intuitive" — Intuitive to whom? A power user? A complete novice? Someone from a different cultural background?

- "The cache should be consistent" — Eventual consistency? Strong consistency? What about partition tolerance?

These are not edge cases. They are the norm. Experienced engineers learn to translate vague English requirements into precise specifications, often using formal tools (user stories with acceptance criteria, UML, TLA+, property-based testing) precisely because English alone is insufficient.
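To see how much "Handle errors gracefully" leaves open, here is one concrete policy among many: retry a failing operation a fixed number of times, log each failure, and fall back to a default value if every attempt fails. The function name, the attempt count, and the decision to treat only `OSError` as retryable are all illustrative assumptions.

```python
import logging
from typing import Callable, TypeVar

T = TypeVar("T")
log = logging.getLogger(__name__)


def call_with_fallback(
    operation: Callable[[], T],
    default: T,
    attempts: int = 3,
) -> T:
    """Hypothetical policy: try `operation` up to `attempts` times,
    log each OSError, and return `default` if all attempts fail.
    Any other exception type propagates to the caller unchanged."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(1, attempts + 1):
        try:
            return operation()
        except OSError as exc:
            log.warning("attempt %d/%d failed: %s", attempt, attempts, exc)
    return default
```

A production policy would probably also need backoff between attempts, a decision about which errors are safe to retry, and a way to tell the caller that the default was used. Each of those is a choice the English sentence never made.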

Ambiguity at Every Level

English is ambiguous at the lexical, syntactic, semantic, and pragmatic levels.

Lexical ambiguity (multiple meanings for the same word): "Bank" can mean a financial institution or the side of a river, and as a verb ("bank on") it means to rely on something. Context usually helps, but in technical documents, context can itself be contested.

Syntactic ambiguity (parsing issues): Classic example — "I saw the man with the telescope." Did I use the telescope to see the man, or did the man have the telescope? Programming languages avoid this with strict grammar rules and operator precedence.
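Python's grammar settles exactly the kind of question the telescope sentence leaves open. Each expression below has one parse, fixed by the language's documented precedence and associativity rules, even where the result surprises newcomers:

```python
# Multiplication binds tighter than addition: 2 + (3 * 4).
assert 2 + 3 * 4 == 14

# Exponentiation binds tighter than a unary minus on its left: -(2 ** 2).
assert -2 ** 2 == -4

# ** is right-associative: 2 ** (3 ** 2), not (2 ** 3) ** 2.
assert 2 ** 3 ** 2 == 512

# Comparisons chain: 1 < 3 > 2 means (1 < 3) and (3 > 2).
assert (1 < 3 > 2) is True
```

A reader may dislike these rules, but no two conforming Python interpreters disagree about them.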

Semantic ambiguity: "The function should return the largest value." Largest by what metric? In what ordering? For floating-point numbers, what about NaN or signed zeros?
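Python's built-in `max` shows how much "the largest value" leaves unsaid. `max` keeps its current candidate unless a later item compares strictly greater, and every comparison involving NaN is false, so the result depends on where the NaN sits. The same rule means that for `0.0` and `-0.0`, which compare equal, `max` returns whichever comes first. The `largest` helper and its `nan_policy` parameter are a hypothetical sketch of writing the missing policy down explicitly.

```python
import math

nan = float("nan")

# Built-in max: the answer depends on element order when NaN is present.
print(max([nan, 1.0]))   # nan
print(max([1.0, nan]))   # 1.0

# Signed zeros compare equal, so max returns whichever comes first.
print(max(0.0, -0.0))    # 0.0
print(max(-0.0, 0.0))    # -0.0


def largest(values: list[float], nan_policy: str = "raise") -> float:
    """Hypothetical explicit policy for 'the largest value'.

    nan_policy="raise": reject any NaN in the input.
    nan_policy="ignore": skip NaNs; raise if nothing is left.
    Signed zeros: prefer +0.0 over -0.0 when both are the maximum.
    """
    if nan_policy not in ("raise", "ignore"):
        raise ValueError(f"unknown nan_policy: {nan_policy!r}")
    if nan_policy == "raise" and any(math.isnan(v) for v in values):
        raise ValueError("input contains NaN")
    candidates = [v for v in values if not math.isnan(v)]
    if not candidates:
        raise ValueError("no comparable values")
    # Tuple key: compare by value first, then break 0.0 / -0.0 ties by sign.
    return max(candidates, key=lambda v: (v, math.copysign(1.0, v)))
```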

Pragmatic ambiguity: What is left unsaid. In a conversation between colleagues who have worked together for years, massive amounts of information are implied. New team members, or an AI trying to implement from a spec, have no access to that shared mental model.

Compare this to Python, often praised for readability:

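```python
from typing import List, Optional


def process_data(data: List[float]) -> Optional[float]:
    # The empty list is handled explicitly instead of letting max() raise.
    if not data:
        return None
    return max(data)
```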

Even without the type hints, the behavior is unambiguous to anyone who knows Python's semantics: max([]) raises a ValueError, but here the empty case is handled explicitly. The return type hint further constrains expectations.
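Python's `max` also accepts a `default` keyword argument that is returned when the iterable is empty, so the same contract can be stated in a single expression. The name `process_data_compact` is only for illustration.

```python
from typing import List, Optional


def process_data_compact(data: List[float]) -> Optional[float]:
    # default= is used only when `data` is empty; for non-empty input,
    # max() behaves exactly as in the explicit version.
    return max(data, default=None)
```

Whether the explicit `if` or the keyword argument reads more clearly is a style call; for lists, the two functions behave identically.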

English has no equivalent of type hints, no compiler to catch inconsistencies, no unit tests for prose.

Why We Put Up With It (And Why We Shouldn't)

English has undeniable strengths. It is expressive, flexible, and culturally rich. It can convey emotion, nuance, humor, and poetry in ways that formal languages cannot. Shakespeare didn't write in lambda calculus for a reason.

But when the goal is reliable transmission of executable ideas — specifications, APIs, requirements, designs — those strengths become liabilities. Flexibility is another word for underspecification.

Anyone with real experience communicating complex ideas knows the pattern:

- Write what seems like a clear English description.

- Send it to a colleague.

- Receive back an implementation that is technically correct but completely misses the intent.

- Spend hours in discussion clarifying what was "obviously" meant.

- Repeat.

This is why mature software organizations invest heavily in:

- Precise requirements documents with measurable acceptance criteria

- Formal methods (where the cost justifies it — e.g., aerospace, finance, medical devices)

- Contract testing, property-based testing, and exhaustive specification

- Code as the single source of truth, with comments used sparingly

The very existence of these practices is an admission that English is inadequate.
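Property-based testing, one of the practices listed above, replaces a handful of hand-picked examples with invariants checked against many generated inputs. Libraries such as Hypothesis add input generation strategies and automatic shrinking of failing cases; the stdlib-only sketch below simply uses `random` to check two properties of Python's `sorted`: the output is in non-decreasing order, and it contains exactly the same elements as the input. The helper name, value ranges, and trial count are arbitrary choices.

```python
import random
from collections import Counter


def check_sort_properties(trials: int = 1_000, seed: int = 0) -> None:
    rng = random.Random(seed)  # fixed seed so any failure is reproducible
    for _ in range(trials):
        data = [rng.randint(-50, 50) for _ in range(rng.randint(0, 20))]
        result = sorted(data)
        # Property 1: every adjacent pair is in non-decreasing order.
        assert all(a <= b for a, b in zip(result, result[1:])), data
        # Property 2: same elements with the same multiplicities.
        assert Counter(result) == Counter(data), data


check_sort_properties()
```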

In physics and mathematics, we don't tolerate "it's approximately true, in the usual sense." We define terms rigorously. We prove theorems. We build models whose predictions can be falsified experimentally. The precision of the language enables the precision of thought and the reliability of results.

Programming aspires to the same rigor. That's why we have static type systems, formal verification tools like Coq or Lean, and languages with strong guarantees (memory safety, data-race freedom, etc.).

English remains the worst because it resists all such discipline. It evolves organically, embraces exceptions, and thrives on interpretation. It is a living, messy, human thing — wonderful for literature, conversation, and persuasion; disastrous for programming.

We don't need to abandon English. We need to recognize its limitations and use better tools where precision matters.

Next time you write a technical specification, ask yourself: Could this be misinterpreted in a way that leads to incorrect behavior? If the answer is yes (and it almost always is), add precision — through examples, edge cases, invariants, or even pseudocode.
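Applied to the `process_data` function from earlier, that advice might look like a short table of examples and edge cases plus an invariant that must hold for any non-empty input. The specific cases below are illustrative, not exhaustive.

```python
from typing import List, Optional


def process_data(data: List[float]) -> Optional[float]:
    if not data:
        return None
    return max(data)


# Examples and edge cases: (input, expected output).
EXAMPLES = [
    ([], None),                            # empty input is a defined case, not an error
    ([4.0], 4.0),                          # single element
    ([-3.0, -1.0, -2.0], -1.0),            # all negative
    ([2.0, 2.0, 1.0], 2.0),                # repeated maximum
    ([1.0, float("inf")], float("inf")),   # infinity is a valid maximum
]

for data, expected in EXAMPLES:
    assert process_data(data) == expected, (data, expected)

# Invariant for any non-empty input without NaN: the result is one of the
# inputs, and no input is larger than it.
sample = [0.5, 7.25, -8.0, 3.0]
result = process_data(sample)
assert result in sample and all(x <= result for x in sample)
```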

Treat English as a high-level, lossy compilation target rather than the final executable specification.

Because in the end, the computer doesn't care about your opinions, your intent, or what "everyone knows" you meant. It executes precisely what you told it — in a real programming language.

And humans, despite our intelligence, are not much better than computers when the specification is written in the worst programming language of all: English.

We just hide our bugs better, and argue about them longer.