<!--

SEO Title: Kubernetes HPA Best Practices: When CPU Works, Why Memory Almost Never Does

Meta Description: Stop blindly setting 70% CPU/memory HPA thresholds. Learn when memory-based HPA breaks, why idle workloads scale up, and what metrics to use instead.

Focus Keywords: kubernetes hpa best practices,kubernetes memory hpa,hpa cpu vs memory kubernetes,horizontal pod autoscaler best practices

Slug: kubernetes-hpa-best-practices

-->

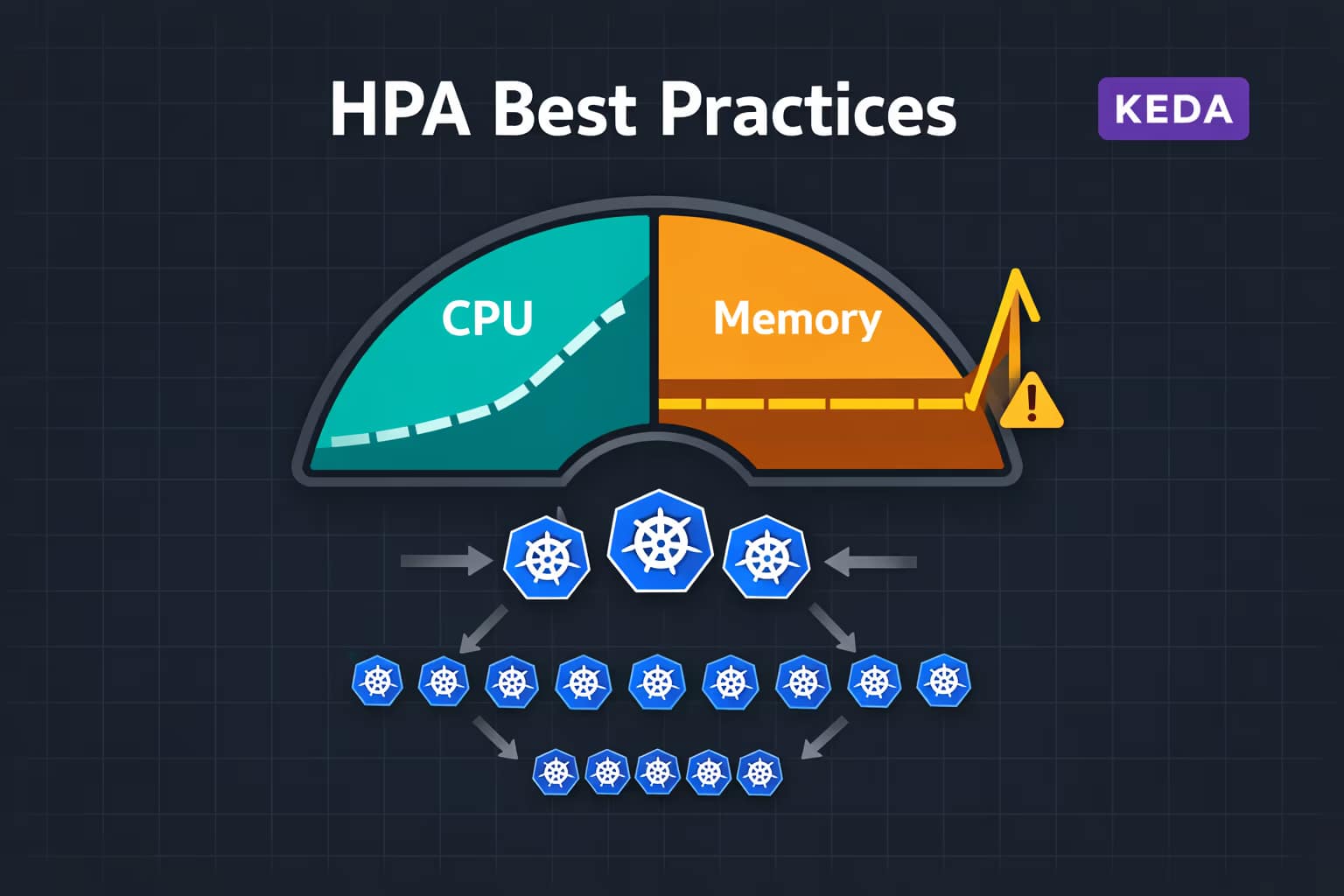

There is a configuration that appears in virtually every Kubernetes cluster: a HorizontalPodAutoscaler targeting 70% CPU utilization and 70% memory utilization. It looks reasonable. It follows the examples in the official documentation. And in many cases, it silently causes more harm than good.

The problems surface in predictable ways: workloads that do nothing get scaled up because their memory footprint is naturally high. Latency-sensitive APIs scale too slowly because the CPU spike is already over by the time new pods are ready. Batch jobs oscillate between scaling up and down during normal operation. And teams spend hours debugging autoscaling behavior that should have been straightforward.

This article is about understanding why the default HPA configuration fails, under which exact conditions memory-based HPA is appropriate (and when it is not), and what alternative metrics — custom metrics, event-driven triggers, and external signals — produce autoscaling behavior that actually matches workload demand.

How HPA Actually Decides to Scale

Before diagnosing the problems, it is worth understanding the mechanics precisely. HPA computes a desired replica count using this formula:

desiredReplicas = ceil(currentReplicas × (currentMetricValue / desiredMetricValue))

For a CPU target of 70%, with 2 replicas currently consuming an average of 140% of their CPU request, HPA computes ceil(2 × (140 / 70)) = 4 replicas. This is conceptually simple but has a critical dependency that most configurations ignore: the metric value is expressed relative to the resource request, not the resource limit.

This distinction is fundamental to understanding every failure mode that follows. If a container has a CPU request of 100m and a limit of 2000m, and it is currently consuming 80m, HPA sees 80% utilization — even though the container is using only 4% of its allowed ceiling. Set an HPA threshold of 70% on a container with a CPU request of 100m and any nontrivial workload will trigger scaling immediately.
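To make the request-versus-limit arithmetic concrete, here is the container from the example above written as a resources fragment. The values come from the text and the comments show what HPA would compute. It is an illustrative fragment, not a recommended configuration.

```yaml
# Container resources fragment using the values from the example above.
resources:
  requests:
    cpu: 100m    # HPA utilization is measured against this value
  limits:
    cpu: 2000m   # not used in the utilization calculation
# Observed usage: 80m
#   utilization seen by HPA = 80m / 100m  = 80%  (above a 70% target)
#   share of the limit      = 80m / 2000m = 4%
```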

The HPA controller evaluates metrics every 15 seconds by default (--horizontal-pod-autoscaler-sync-period). Scale-up happens quickly: within one to three sync cycles when the threshold is consistently exceeded. Scale-down is deliberately slow: by default the controller acts on the highest replica recommendation from the previous 5 minutes (--horizontal-pod-autoscaler-downscale-stabilization), so replicas only drop once the lower recommendation has held for the whole window. This avoids thrashing, and the asymmetry matters when debugging oscillation.

CPU-Based HPA: When It Works and When It Doesn’t

CPU is a compressible resource. When a container hits its CPU limit, the kernel throttles it — the process slows down but does not crash or get evicted. This property makes CPU a reasonable proxy for load in many, but not all, scenarios.

Where CPU HPA Works Well

Stateless request-processing workloads are the sweet spot for CPU-based HPA. If your service does CPU-bound work per request — REST APIs performing data transformation, compute-heavy business logic, image processing — then CPU utilization correlates strongly with request volume. More requests means more CPU consumed, which means HPA adds replicas, which distributes the load.

The key prerequisites for CPU HPA to work correctly are:

- Accurate CPU requests. Set requests to the actual sustained consumption of the workload under normal load, not a low placeholder. Use VPA in recommendation mode or historical Prometheus data to right-size requests before enabling HPA.

- Reasonable request-to-limit ratio. Keeping the limit at no more than about four times the request keeps HPA thresholds meaningful. A container with request 100m and limit 4000m makes percentage-based thresholds nearly useless.

- CPU consumption that tracks user load linearly. If your service does CPU-heavy background work independent of incoming requests, CPU utilization will trigger scaling regardless of actual demand.

Where CPU HPA Fails

Latency-sensitive services with sharp traffic spikes. HPA reacts to average CPU utilization measured over the polling window. For a service that handles traffic bursts — a flash sale, a cron-triggered batch of API calls, a notification broadcast — by the time the HPA controller detects the spike, queues new pods, and those pods pass readiness checks, the burst may already be over. The result is replicas added after the damage is done, with the added cost of a scale-down cycle afterward.

I/O-bound workloads. A service that spends most of its time waiting on database queries, external API calls, or message queue reads will show low CPU utilization even under heavy load. HPA will not add replicas while the service is degraded — it sees idle CPUs while goroutines or threads are blocked waiting on I/O.

Workloads with cold-start costs. If a new replica takes 30-60 seconds to warm up (loading ML models, establishing connection pools, populating caches), scaling decisions need to happen earlier — before CPU peaks — not in reaction to it.

Memory-Based HPA: Why It Almost Always Breaks

Memory is an incompressible resource. Unlike CPU — which can be throttled without killing a process — when a container exhausts its memory limit, the OOM killer terminates it. This single property cascades into a set of fundamental problems with using memory as an HPA trigger.

The Core Problem: Memory Doesn’t Naturally Correlate With Load

For most well-architected services, memory consumption is relatively stable. A Go service allocates memory at startup for its runtime structures, connection pools, and caches — and then maintains roughly that footprint regardless of traffic. A JVM application allocates a heap at startup and uses garbage collection to manage it. In both cases, memory usage under 10 requests per second and under 10,000 requests per second may be nearly identical.

This means a memory-based HPA with a 70% threshold will either:

- Never trigger, because the workload’s memory is stable and always below the threshold — rendering the HPA useless.

- Always trigger, because the workload’s baseline memory consumption is naturally above the threshold — causing the workload to scale out permanently and never scale back in.

Neither outcome corresponds to actual scaling need.

The Request Misconfiguration Trap

This is the failure mode behind idle workloads scaling up, and it is the most common cause of “my workload scales up for no reason.” Consider a Java service that needs 512Mi of heap to run normally. The team sets the memory request to 256Mi, which is too low, either to save cost or because the initial estimate was wrong. The service immediately consumes 200% of its memory request just by being alive. An HPA with a 70% memory target will scale this workload to maximum replicas within minutes of deployment, and it will stay there forever.

The fix is never “adjust the HPA threshold.” The fix is right-sizing the memory request. But this reveals the deeper issue: memory-based HPA is extremely sensitive to the accuracy of your resource requests, and most teams do not have accurate requests — especially for newer workloads or after code changes that alter memory footprint.
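As a rough illustration, the two fragments below contrast the undersized request from the example with a right-sized one. The 1Gi figure is a hypothetical value chosen to leave headroom above the observed footprint; the real number should come from measurement.

```yaml
# Before: request far below real usage (values from the example above).
resources:
  requests:
    memory: 256Mi   # steady usage ~512Mi -> 512 / 256 = 200% utilization
---
# After: request sized from observed usage plus headroom (hypothetical value).
resources:
  requests:
    memory: 1Gi     # steady usage ~512Mi -> 512 / 1024 = 50% utilization
```

With the second fragment, a 70% memory target is no longer exceeded at idle, so the HPA stops pinning the workload at maximum replicas.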

JVM and Go Runtime Memory Behavior

JVM workloads are particularly problematic. By default, the JVM allocates heap up to a maximum (-Xmx) and then holds that memory — it does not release heap back to the OS aggressively, even after garbage collection. A JVM service that handles one request per hour will show nearly the same memory footprint as one handling thousands of requests per minute. Furthermore, the JVM’s garbage collector introduces memory spikes during collection cycles that are unrelated to load.

In containerized JVM environments, you also need to account for container support (-XX:+UseContainerSupport, enabled by default since JDK 10 and backported to 8u191), which makes the JVM size its heap ceiling from the container memory limit rather than from the node’s memory. The default ceiling is 25% of that limit (-XX:MaxRAMPercentage), but many teams raise it to 75% or more. Because the JVM rarely returns heap to the OS, the container can then sit at 80-90% of its memory limit regardless of traffic, which immediately triggers any memory-based HPA.
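One way to keep the heap ceiling explicit is to set it as a percentage of the container limit with the HotSpot flag -XX:MaxRAMPercentage. The sketch below assumes a hypothetical container named my-service and passes the flag through JAVA_TOOL_OPTIONS, which the JVM reads at startup. The 50% figure, image name, and memory sizes are placeholders, not recommendations.

```yaml
# Pod template fragment (container name, image, and sizes are hypothetical).
containers:
  - name: my-service
    image: registry.example.com/my-service:1.0.0
    env:
      - name: JAVA_TOOL_OPTIONS
        value: "-XX:MaxRAMPercentage=50.0"  # heap ceiling = 50% of the memory limit
    resources:
      requests:
        memory: 1Gi   # HPA memory utilization is measured against this
      limits:
        memory: 1Gi   # the JVM sizes its heap ceiling from this
```

Setting request equal to limit here means the percentage HPA sees and the percentage the JVM works from share the same base, which makes the numbers easier to reason about.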

Go workloads behave differently but also poorly with memory HPA. Go’s garbage collector is designed to maintain low latency rather than minimal memory use. The runtime may hold memory above what is strictly needed, and the memory footprint can vary based on GC tuning parameters (GOGC, GOMEMLIMIT) in ways that are not correlated with incoming request load.

When Memory HPA Is Actually Appropriate

There are narrow cases where memory-based HPA makes sense:

- Workloads where memory consumption genuinely tracks with load linearly. Some data processing pipelines, in-memory caches that grow with request volume, or streaming applications that buffer data proportionally to throughput. If you can demonstrate from metrics that memory and load have a strong linear correlation, memory HPA is defensible.

- As a safety valve alongside CPU HPA. Using memory as a secondary metric (not primary) to protect against memory leaks or runaway allocations in a service that normally scales on CPU. In this case, set the memory threshold high — 85-90% — so it only triggers in genuine overconsumption scenarios.

- Caching services where eviction is not desirable. If a service uses memory as a performance cache and you want to scale out before memory pressure causes cache eviction, memory utilization can be a useful trigger — provided requests are accurately sized.

Outside these specific cases, removing memory from your HPA spec and relying on the signals below will produce better behavior in virtually every scenario.
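For the safety-valve case in the list above, a minimal sketch might look like the following. The resource names and replica bounds are placeholders, and the 90% figure is just one point in the 85-90% range suggested earlier.

```yaml
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: my-service-hpa
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: my-service
  minReplicas: 2
  maxReplicas: 20
  metrics:
    - type: Resource
      resource:
        name: cpu
        target:
          type: Utilization
          averageUtilization: 70   # primary scaling signal
    - type: Resource
      resource:
        name: memory
        target:
          type: Utilization
          averageUtilization: 90   # safety valve, expected to stay dormant
```

When several metrics are listed, HPA computes a desired replica count for each and uses the largest, so the memory entry only takes effect when it asks for more replicas than CPU does.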

Right-Sizing Requests Before You Add HPA

No HPA strategy works correctly without accurate resource requests. Before adding any autoscaler — CPU, memory, or custom metrics — run your workload under representative load and measure actual consumption. The easiest way to do this is with VPA in recommendation mode:

```yaml
apiVersion: autoscaling.k8s.io/v1
kind: VerticalPodAutoscaler
metadata:
  name: my-service-vpa
spec:
  targetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: my-service
  updatePolicy:
    updateMode: "Off"  # Recommendation only; don't auto-apply
```

After 24-48 hours of traffic, check the VPA recommendations:

```shell
kubectl describe vpa my-service-vpa
```

The lowerBound, target, and upperBound values give you a data-driven baseline for setting requests. Set your requests at or near the VPA target value before configuring HPA. This single step eliminates the most common cause of HPA misbehavior.

Note that VPA and HPA cannot both manage the same resource metric simultaneously. If VPA is set to auto-update CPU or memory, and HPA is also scaling on those metrics, the two controllers will fight each other. The safe combination is: HPA on CPU/memory + VPA in recommendation-only mode, or HPA on custom metrics + VPA on CPU/memory in auto mode. See the Kubernetes VPA guide for the full details.

Better Signals: What to Scale On Instead

The fundamental shift is moving from resource consumption metrics (which describe the past) to demand metrics (which describe what the workload is being asked to do right now or will be asked to do in seconds).

Requests Per Second (RPS)

For HTTP services, requests per second per replica is usually the most accurate proxy for load. Unlike CPU, it measures demand directly — not a side-effect of demand. An HPA that maintains 500 RPS per replica will scale predictably as traffic grows, regardless of whether the service is CPU-bound, memory-bound, or I/O-bound.

RPS is available as a custom metric from your service mesh (Istio exposes the istio_requests_total counter, from which a per-second rate can be derived), from your ingress controller (NGINX exposes request rates via Prometheus), or from your application’s own Prometheus metrics. Configuring HPA on custom metrics requires the Prometheus Adapter or a compatible custom metrics API implementation.

```yaml
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: my-service-hpa
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: my-service
  minReplicas: 2
  maxReplicas: 20
  metrics:
    - type: Pods
      pods:
        metric:
          name: http_requests_per_second
        target:
          type: AverageValue
          averageValue: "500"  # 500 RPS per replica
```
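The http_requests_per_second metric in the manifest above has to be served by the custom metrics API. A minimal sketch of a Prometheus Adapter rule that could produce it is shown below. It assumes the application exports a counter named http_requests_total with namespace and pod labels; that counter name, its labels, and the 2-minute rate window are assumptions, not something the manifest guarantees.

```yaml
# Fragment of the Prometheus Adapter configuration file.
rules:
  - seriesQuery: 'http_requests_total{namespace!="",pod!=""}'
    resources:
      overrides:
        namespace: {resource: "namespace"}
        pod: {resource: "pod"}
    name:
      matches: "^(.*)_total$"
      as: "${1}_per_second"   # http_requests_total -> http_requests_per_second
    metricsQuery: 'sum(rate(<<.Series>>{<<.LabelMatchers>>}[2m])) by (<<.GroupBy>>)'
```

The rate window is a trade-off: a shorter window reacts faster to bursts, while a longer one smooths out noise that would otherwise cause replica churn.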

Queue Depth and Lag

For consumer workloads — services reading from Kafka, RabbitMQ, SQS, or any message queue — the right scaling signal is consumer lag: how many messages are waiting to be processed. A lag of zero means consumers are keeping up; a growing lag means you need more consumers.

CPU will not give you this signal reliably. A consumer blocked on a slow database write will show low CPU but growing lag. An idle consumer will show low CPU even if the queue contains millions of unprocessed messages. Scaling on lag directly solves both problems.

This is precisely the use case that KEDA was built for. KEDA’s Kafka scaler, for example, reads consumer group lag directly and scales replicas to maintain a configurable lag threshold — no custom metrics pipeline required.
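A minimal sketch of such a KEDA ScaledObject is shown below. The Deployment name, broker address, consumer group, topic, and lag threshold are all hypothetical placeholders.

```yaml
apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: my-consumer-scaler
spec:
  scaleTargetRef:
    name: my-consumer            # hypothetical Deployment running the consumers
  minReplicaCount: 2
  maxReplicaCount: 20
  triggers:
    - type: kafka
      metadata:
        bootstrapServers: kafka.example.svc:9092   # hypothetical broker address
        consumerGroup: my-consumer-group           # hypothetical consumer group
        topic: orders                              # hypothetical topic
        lagThreshold: "100"                        # target lag per replica
```

Adding replicas beyond the topic's partition count generally does not help, since extra consumers in the group have no partition to read from, so maxReplicaCount is usually chosen with the partition count in mind.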

Latency

P99 latency per replica is an excellent scaling signal for latency-sensitive services. If your SLO is a 200ms P99 response time and latency starts climbing toward 400ms, that is a direct signal that the service is overloaded — regardless of what CPU or memory shows.

Latency-based autoscaling requires custom metrics from your service mesh or APM tool, but the added complexity is often justified for user-facing APIs where latency directly impacts experience.
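As a sketch of what this could look like, the manifest below scales on a per-pod P99 latency metric. The metric name http_request_duration_p99_seconds is hypothetical and would have to be exposed through a custom metrics API implementation; the 200ms target mirrors the SLO example above.

```yaml
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: my-service-latency-hpa
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: my-service
  minReplicas: 2
  maxReplicas: 20
  metrics:
    - type: Pods
      pods:
        metric:
          name: http_request_duration_p99_seconds  # hypothetical custom metric
        target:
          type: AverageValue
          averageValue: 200m   # 0.2 seconds, i.e. the 200ms P99 target
```

Latency does not fall in proportion to the number of replicas, so the proportional HPA formula can overshoot or undershoot on this signal; conservative scaling policies are a reasonable companion to it.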

Scheduled and Predictive Scaling

For workloads with predictable traffic patterns — business-hours services, weekly batch jobs, end-of-month processing peaks — proactive scaling outperforms reactive scaling by definition. Rather than waiting for CPU to spike and then scrambling to add replicas, you pre-scale before the expected load increase.

KEDA’s Cron scaler enables this pattern declaratively, defining scale rules based on time windows rather than observed metrics.
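A minimal sketch of a business-hours schedule with the Cron scaler is shown below. The Deployment name, timezone, schedule, and replica counts are hypothetical placeholders.

```yaml
apiVersion: keda.sh/v1alpha1
kind: ScaledObject
metadata:
  name: my-service-business-hours
spec:
  scaleTargetRef:
    name: my-service             # hypothetical Deployment
  minReplicaCount: 2
  maxReplicaCount: 20
  triggers:
    - type: cron
      metadata:
        timezone: Europe/Berlin  # hypothetical timezone
        start: "0 8 * * 1-5"     # scale up at 08:00, Monday to Friday
        end: "0 18 * * 1-5"      # release the schedule at 18:00
        desiredReplicas: "10"    # replicas to hold during the window
```

KEDA creates and manages an HPA for the target on your behalf, so a ScaledObject like this is normally used instead of, not alongside, a hand-written HPA for the same Deployment.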

HPA Configuration Best Practices

Always Set minReplicas ≥ 2 for Production

A minReplicas: 1 HPA means your service has a single point of failure during scale-in events. When HPA scales down to 1 replica and that pod is evicted for node maintenance, your service has zero available instances for the duration of the new pod’s startup time. For any production workload, set minReplicas: 2 as a baseline.

Tune Stabilization Windows

The default 5-minute scale-down stabilization window is too short for many workloads. A service that processes jobs in 3-minute batches with longer idle gaps between them will show a predictable CPU trough between batches. Once that trough outlasts the window, HPA scales down, only to scale back up when the next batch arrives. Increase the stabilization window to match your workload’s natural cycle:

```yaml
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: my-service-hpa
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: my-service
  minReplicas: 2
  maxReplicas: 20
  metrics:
    - type: Resource
      resource:
        name: cpu
        target:
          type: Utilization
          averageUtilization: 60
  behavior:
    scaleDown:
      stabilizationWindowSeconds: 600  # 10 minutes
      policies:
        - type: Percent
          value: 25  # Scale down max 25% of replicas at once
          periodSeconds: 60
    scaleUp:
      stabilizationWindowSeconds: 0  # Scale up immediately
      policies:
        - type: Percent
          value: 100
          periodSeconds: 30
```

The behavior block (introduced in autoscaling/v2beta2 in Kubernetes 1.18 and stable in autoscaling/v2 since Kubernetes 1.23) gives you independent control over scale-up and scale-down. Aggressive scale-up with conservative scale-down is the right default for most production services.